Machine Learning Street Talk (MLST)

Top 10 Machine Learning Street Talk (MLST) Episodes

Goodpods has curated a list of the 10 best Machine Learning Street Talk (MLST) episodes, ranked by the number of listens and likes each episode has garnered from our listeners. If you are listening to Machine Learning Street Talk (MLST) for the first time, there's no better place to start than one of these standout episodes. If you are already a fan of the show, vote for your favorite Machine Learning Street Talk (MLST) episode by adding your comments to the episode page.

Eliezer Yudkowsky and Stephen Wolfram on AI X-risk

11/11/24 • 258 min

Eliezer Yudkowsky and Stephen Wolfram discuss artificial intelligence and its potential existential risks. They traverse fundamental questions about AI safety, consciousness, computational irreducibility, and the nature of intelligence.

The discussion centered on Yudkowsky’s argument that advanced AI systems pose an existential threat to humanity, primarily because of the challenge of alignment and the potential for emergent goals that diverge from human values. Wolfram, while acknowledging potential risks, approached the topic from his signature measured perspective, emphasizing the importance of understanding the fundamental nature of computational systems and questioning whether AI systems would necessarily develop the kind of goal-directed behavior Yudkowsky fears.

***

MLST IS SPONSORED BY TUFA AI LABS!

The current winners of the ARC challenge, MindsAI, are part of Tufa AI Labs. They are hiring ML engineers. Interested? Please go to https://tufalabs.ai/

***

TOC:

1. Foundational AI Concepts and Risks

[00:00:01] 1.1 AI Optimization and System Capabilities Debate

[00:06:46] 1.2 Computational Irreducibility and Intelligence Limitations

[00:20:09] 1.3 Existential Risk and Species Succession

[00:23:28] 1.4 Consciousness and Value Preservation in AI Systems

2. Ethics and Philosophy in AI

[00:33:24] 2.1 Moral Value of Human Consciousness vs. Computation

[00:36:30] 2.2 Ethics and Moral Philosophy Debate

[00:39:58] 2.3 Existential Risks and Digital Immortality

[00:43:30] 2.4 Consciousness and Personal Identity in Brain Emulation

3. Truth and Logic in AI Systems

[00:54:39] 3.1 AI Persuasion Ethics and Truth

[01:01:48] 3.2 Mathematical Truth and Logic in AI Systems

[01:11:29] 3.3 Universal Truth vs Personal Interpretation in Ethics and Mathematics

[01:14:43] 3.4 Quantum Mechanics and Fundamental Reality Debate

4. AI Capabilities and Constraints

[01:21:21] 4.1 AI Perception and Physical Laws

[01:28:33] 4.2 AI Capabilities and Computational Constraints

[01:34:59] 4.3 AI Motivation and Anthropomorphization Debate

[01:38:09] 4.4 Prediction vs Agency in AI Systems

5. AI System Architecture and Behavior

[01:44:47] 5.1 Computational Irreducibility and Probabilistic Prediction

[01:48:10] 5.2 Teleological vs Mechanistic Explanations of AI Behavior

[02:09:41] 5.3 Machine Learning as Assembly of Computational Components

[02:29:52] 5.4 AI Safety and Predictability in Complex Systems

6. Goal Optimization and Alignment

[02:50:30] 6.1 Goal Specification and Optimization Challenges in AI Systems

[02:58:31] 6.2 Intelligence, Computation, and Goal-Directed Behavior

[03:02:18] 6.3 Optimization Goals and Human Existential Risk

[03:08:49] 6.4 Emergent Goals and AI Alignment Challenges

7. AI Evolution and Risk Assessment

[03:19:44] 7.1 Inner Optimization and Mesa-Optimization Theory

[03:34:00] 7.2 Dynamic AI Goals and Extinction Risk Debate

[03:56:05] 7.3 AI Risk and Biological System Analogies

[04:09:37] 7.4 Expert Risk Assessments and Optimism vs Reality

8. Future Implications and Economics

[04:13:01] 8.1 Economic and Proliferation Considerations

SHOWNOTES (transcript, references, summary, best quotes, etc.):

https://www.dropbox.com/scl/fi/3st8dts2ba7yob161dchd/EliezerWolfram.pdf?rlkey=b6va5j8upgqwl9s2muc924vtt&st=vemwqx7a&dl=0

How Machines Learn to Ignore the Noise (Kevin Ellis + Zenna Tavares)

04/08/25 • 76 min

Prof. Kevin Ellis and Dr. Zenna Tavares talk about making AI learn more like humans do. They want AI to learn from just a little information by actively trying things out, rather than only by passively looking at tons of data.

They discuss two main ways AI can "think": one follows explicit rules or steps, like a computer program (induction), and the other is more intuitive, guessing the answer directly from patterns, as modern neural networks often do (transduction). They found that combining both methods works well for solving complex puzzles like ARC.
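To make the two styles concrete, here is a toy sketch in Python. It is not the method from the episode or from the Li et al. paper: the list-processing primitives (`reverse`, `sort`, `double`, `increment`) are hypothetical, and a nearest-neighbour lookup stands in for the neural network that would normally do transduction. Induction searches for an explicit program that fits every training example; the hybrid uses such a program when one exists and otherwise falls back to transduction.

```python
from itertools import product

# Hypothetical primitive operations on lists of integers (a tiny stand-in DSL).
PRIMITIVES = {
    "reverse": lambda xs: list(reversed(xs)),
    "sort": sorted,
    "double": lambda xs: [2 * x for x in xs],
    "increment": lambda xs: [x + 1 for x in xs],
}


def run(program, xs):
    """Apply a sequence of primitive names, left to right."""
    for name in program:
        xs = PRIMITIVES[name](xs)
    return list(xs)


def induce(examples, max_depth=2):
    """Induction: search for an explicit program consistent with every example."""
    for depth in range(1, max_depth + 1):
        for program in product(PRIMITIVES, repeat=depth):
            if all(run(program, x) == y for x, y in examples):
                return program
    return None


def transduce(examples, query):
    """Transduction stand-in: copy the output of the most similar training input.
    (A crude nearest-neighbour rule used in place of a neural predictor.)"""
    def distance(a, b):
        return sum(abs(p - q) for p, q in zip(a, b)) + abs(len(a) - len(b))

    _, best_output = min(examples, key=lambda ex: distance(ex[0], query))
    return best_output


def solve(examples, query):
    """Hybrid: prefer an induced program; fall back to transduction if none fits."""
    program = induce(examples)
    if program is not None:
        return run(program, query), "induction"
    return transduce(examples, query), "transduction"
```

For example, with training pairs `([3, 1, 2], [2, 4, 6])` and `([5, 4], [8, 10])`, the search finds the program `("sort", "double")` and applies it to a new input. If no short program fits (say, every output is a single number), `solve` falls back to copying the closest training output.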

A key idea is "compositionality": building big ideas from small ones, like LEGO bricks. This is powerful but can also be overwhelming, because the number of possible combinations grows very quickly. Another important idea is "abstraction": understanding things simply, without getting lost in details, and recognizing that there are different levels of understanding.
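A minimal Python sketch of both halves of that idea, assuming ARC-style grids stored as tuples of tuples. Two small primitives (the hypothetical helpers `transpose` and `flip_horizontal`) compose into a bigger one, a 90-degree clockwise rotation, while `program_count` shows why searching over compositions becomes overwhelming: k primitives allow k^n distinct sequences of length n.

```python
from functools import reduce

# A grid is a tuple of rows; each row is a tuple of cell colours.
Grid = tuple[tuple[int, ...], ...]


def transpose(g: Grid) -> Grid:
    """Swap rows and columns."""
    return tuple(zip(*g))


def flip_horizontal(g: Grid) -> Grid:
    """Mirror each row left to right."""
    return tuple(tuple(reversed(row)) for row in g)


def compose(*fns):
    """Chain small functions into a bigger one, applied left to right."""
    return lambda x: reduce(lambda acc, f: f(acc), fns, x)


# A 'bigger idea' built from two small pieces: rotate 90 degrees clockwise.
rotate_cw = compose(transpose, flip_horizontal)


def program_count(num_primitives: int, max_length: int) -> int:
    """Number of distinct primitive sequences of length 1..max_length."""
    return sum(num_primitives ** n for n in range(1, max_length + 1))
```

With 10 primitives, `program_count(10, 3)` is already 1,110 candidate programs, and each extra step multiplies the space by ten.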

Ultimately, they believe the best AI will need to explore, experiment, and build models of the world, much like humans do when learning something new.

SPONSOR MESSAGES:

***

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. They are hiring a Chief Engineer and ML engineers, and they host events in Zurich.

Go to https://tufalabs.ai/

***

TRANSCRIPT:

https://www.dropbox.com/scl/fi/3ngggvhb3tnemw879er5y/BASIS.pdf?rlkey=lr2zbj3317mex1q5l0c2rsk0h&dl=0

Zenna Tavares:

http://www.zenna.org/

Kevin Ellis:

https://www.cs.cornell.edu/~ellisk/

TOC:

1. Compositionality and Learning Foundations

[00:00:00] 1.1 Compositional Search and Learning Challenges

[00:03:55] 1.2 Bayesian Learning and World Models

[00:12:05] 1.3 Programming Languages and Compositionality Trade-offs

[00:15:35] 1.4 Inductive vs Transductive Approaches in AI Systems

2. Neural-Symbolic Program Synthesis

[00:27:20] 2.1 Integration of LLMs with Traditional Programming and Meta-Programming

[00:30:43] 2.2 Wake-Sleep Learning and DreamCoder Architecture

[00:38:26] 2.3 Program Synthesis from Interactions and Hidden State Inference

[00:41:36] 2.4 Abstraction Mechanisms and Resource Rationality

[00:48:38] 2.5 Inductive Biases and Causal Abstraction in AI Systems

3. Abstract Reasoning Systems

[00:52:10] 3.1 Abstract Concepts and Grid-Based Transformations in ARC

[00:56:08] 3.2 Induction vs Transduction Approaches in Abstract Reasoning

[00:59:12] 3.3 ARC Limitations and Interactive Learning Extensions

[01:06:30] 3.4 Wake-Sleep Program Learning and Hybrid Approaches

[01:11:37] 3.5 Project MARA and Future Research Directions

REFS:

[00:00:25] DreamCoder, Kevin Ellis et al.

https://arxiv.org/abs/2006.08381

[00:01:10] Mind Your Step, Ryan Liu et al.

https://arxiv.org/abs/2410.21333

[00:06:05] Bayesian inference, Griffiths, T. L., Kemp, C., & Tenenbaum, J. B.

https://psycnet.apa.org/record/2008-06911-003

[00:13:00] Induction and Transduction, Wen-Ding Li, Zenna Tavares, Yewen Pu, Kevin Ellis

https://arxiv.org/abs/2411.02272

[00:23:15] Neurosymbolic AI, Garcez, Artur d'Avila et al.

https://arxiv.org/abs/2012.05876

[00:33:50] Induction and Transduction (II), Wen-Ding Li, Kevin Ellis et al.

https://arxiv.org/abs/2411.02272

[00:38:35] ARC, François Chollet

https://arxiv.org/abs/1911.01547

[00:39:20] Causal Reactive Programs, Ria Das, Joshua B. Tenenbaum, Armando Solar-Lezama, Zenna Tavares

http://www.zenna.org/publications/autumn2022.pdf

[00:42:50] MuZero, Julian Schrittwieser et al.

http://arxiv.org/pdf/1911.08265

[00:43:20] VisualPredicator, Yichao Liang

https://arxiv.org/abs/2410.23156

[00:48:55] Bayesian Models of Cognition, Thomas L. Griffiths, Nick Chater, Joshua B. Tenenbaum

https://mitpress.mit.edu/9780262049412/bayesian-models-of-cognition/

[00:49:30] The Bitter Lesson, Rich Sutton

http://www.incompleteideas.net/IncIdeas/BitterLesson.html

[01:06:35] Program induction, Kevin Ellis, Wen-Ding Li

https://arxiv.org/pdf/2411.02272

[01:06:50] DreamCoder (II), Kevin Ellis et al.

https://arxiv.org/abs/2006.08381

[01:11:55] Project MARA, Zenna Tavares, Kevin Ellis

https://www.basis.ai/blog/mara/

Joscha Bach and Connor Leahy on AI risk

06/20/23 • 91 min

Support us! https://www.patreon.com/mlst

MLST Discord: https://discord.gg/aNPkGUQtc5

Twitter: https://twitter.com/MLStreetTalk

The first 10 minutes of Joscha's audio aren't great; the quality improves after that.

Transcript and longer summary: https://docs.google.com/document/d/1TUJhlSVbrHf2vWoe6p7xL5tlTK_BGZ140QqqTudF8UI/edit?usp=sharing

Dr. Joscha Bach argued that general intelligence emerges from civilization, not individuals: given our biological constraints, humans cannot achieve a high level of general intelligence on their own. Bach believes AGI may become integrated into all parts of the world, including human minds and bodies. He thinks a future in which humans and AGI coexist harmoniously is possible if we develop a shared purpose and an incentive to align. However, Bach is uncertain about how AI progress will unfold or which scenarios are most likely. He argued that global control and regulation of AI is unrealistic: while regulation may address some concerns, it cannot stop continued progress in AI. He believes individuals determine their own values, so "human values" cannot be formally specified and aligned across humanity. For Bach, the possibility of building beneficial AGI is exciting, but much work is still needed to ensure a positive outcome.

Connor Leahy believes we have more control over the future than the default outcome might suggest. With sufficient time and effort, humanity could develop the technology and coordination needed to build a beneficial AGI. However, the default trajectory likely leads to an undesirable outcome if we do not actively work to build a better future. Leahy thinks finding values and priorities that most humans endorse could help align AI, even if individuals disagree on some values. He argued that a future in which humans and AGI coexist harmoniously is ideal but will require substantial work to achieve. While regulation faces challenges, it remains worth exploring. Leahy believes limits to progress in AI exist, but we are unlikely to reach them before humanity is at risk. He worries that even modestly superhuman intelligence could disrupt the status quo if it is misaligned with human values and priorities.
Overall, Bach and Leahy expressed optimism about the possibility of building beneficial AGI but believe we must address its risks and challenges proactively. They agreed that substantial uncertainty remains about how AI will progress and which scenarios are most plausible. Still, developing a shared purpose between humans and AI, improving coordination and control, and identifying human values to guide progress could all improve the odds of a beneficial outcome. With openness to new ideas and a willingness to consider multiple perspectives, continued discussions like this one could help ensure the future of AI is one that benefits and inspires humanity.

TOC:

00:00:00 - Introduction and Background

00:02:54 - Different Perspectives on AGI

00:13:59 - The Importance of AGI

00:23:24 - Existential Risks and the Future of Humanity

00:36:21 - Coherence and Coordination in Society

00:40:53 - Possibilities and Future of AGI

00:44:08 - Coherence and alignment

01:08:32 - The role of values in AI alignment

01:18:33 - The future of AGI and merging with AI

01:22:14 - The limits of AI alignment

01:23:06 - The scalability of intelligence

01:26:15 - Closing statements and future prospects

Francois Chollet - ARC reflections - NeurIPS 2024

01/09/25 • 86 min

François Chollet discusses the outcomes of the 2024 ARC Prize competition on ARC-AGI (the Abstraction and Reasoning Corpus), in which the top accuracy on the private evaluation set rose from 33% to 55.5%.

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments.

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. Are you interested in working on reasoning or getting involved in their events?

They are hosting an event in Zurich on January 9th with the ARChitects; join if you can.

Go to https://tufalabs.ai/

***

Read about the recent o3 result on ARC here (Chollet knew about it at the time of the interview but wasn't allowed to discuss it):

https://arcprize.org/blog/oai-o3-pub-breakthrough

TOC:

1. Introduction and Opening

[00:00:00] 1.1 Deep Learning vs. Symbolic Reasoning: François’s Long-Standing Hybrid View

[00:00:48] 1.2 “Why Do They Call You a Symbolist?” – Addressing Misconceptions

[00:01:31] 1.3 Defining Reasoning

3. ARC Competition 2024 Results and Evolution

[00:07:26] 3.1 ARC Prize 2024: Reflecting on the Narrative Shift Toward System 2

[00:10:29] 3.2 Comparing Private Leaderboard vs. Public Leaderboard Solutions

[00:13:17] 3.3 Two Winning Approaches: Deep Learning–Guided Program Synthesis and Test-Time Training

4. Transduction vs. Induction in ARC

[00:16:04] 4.1 Test-Time Training, Overfitting Concerns, and Developer-Aware Generalization

[00:19:35] 4.2 Gradient Descent Adaptation vs. Discrete Program Search

5. ARC-2 Development and Future Directions

[00:23:51] 5.1 Ensemble Methods, Benchmark Flaws, and the Need for ARC-2

[00:25:35] 5.2 Human-Level Performance Metrics and Private Test Sets

[00:29:44] 5.3 Task Diversity, Redundancy Issues, and Expanded Evaluation Methodology

6. Program Synthesis Approaches

[00:30:18] 6.1 Induction vs. Transduction

[00:32:11] 6.2 Challenges of Writing Algorithms for Perceptual vs. Algorithmic Tasks

[00:34:23] 6.3 Combining Induction and Transduction

[00:37:05] 6.4 Multi-View Insight and Overfitting Regulation

7. Latent Space and Graph-Based Synthesis

[00:38:17] 7.1 Clément Bonnet’s Latent Program Search Approach

[00:40:10] 7.2 Decoding to Symbolic Form and Local Discrete Search

[00:41:15] 7.3 Graph of Operators vs. Token-by-Token Code Generation

[00:45:50] 7.4 Iterative Program Graph Modifications and Reusable Functions

8. Compute Efficiency and Lifelong Learning

[00:48:05] 8.1 Symbolic Process for Architecture Generation

[00:50:33] 8.2 Logarithmic Relationship of Compute and Accuracy

[00:52:20] 8.3 Learning New Building Blocks for Future Tasks

9. AI Reasoning and Future Development

[00:53:15] 9.1 Consciousness as a Self-Consistency Mechanism in Iterative Reasoning

[00:56:30] 9.2 Reconciling Symbolic and Connectionist Views

[01:00:13] 9.3 System 2 Reasoning - Awareness and Consistency

[01:03:05] 9.4 Novel Problem Solving, Abstraction, and Reusability

10. Program Synthesis and Research Lab

[01:05:53] 10.1 François Leaving Google to Focus on Program Synthesis

[01:09:55] 10.2 Democratizing Programming and Natural Language Instruction

11. Frontier Models and o1 Architecture

[01:14:38] 11.1 Search-Based Chain of Thought vs. Standard Forward Pass

[01:16:55] 11.2 o1’s Natural Language Program Generation and Test-Time Compute Scaling

[01:19:35] 11.3 Logarithmic Gains with Deeper Search

12. ARC Evaluation and Human Intelligence

[01:22:55] 12.1 LLMs as Guessing Machines and Agent Reliability Issues

[01:25:02] 12.2 ARC-2 Human Testing and Correlation with g-Factor

[01:26:16] 12.3 Closing Remarks and Future Directions

SHOWNOTES PDF:

https://www.dropbox.com/scl/fi/ujaai0ewpdnsosc5mc30k/CholletNeurips.pdf?rlkey=s68dp432vefpj2z0dp5wmzqz6&st=hazphyx5&dl=0

How Do AI Models Actually Think? - Laura Ruis

01/20/25 • 78 min

Laura Ruis, a PhD student at University College London and researcher at Cohere, explains her groundbreaking research into how large language models (LLMs) perform reasoning tasks, the fundamental mechanisms underlying LLM reasoning capabilities, and whether these models primarily rely on retrieval or develop procedural knowledge.

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments.

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. Are you interested in working on reasoning or getting involved in their events?

Go to https://tufalabs.ai/

***

TOC:

1. LLM Foundations and Learning

1.1 Scale and Learning in Language Models [00:00:00]

1.2 Procedural Knowledge vs Fact Retrieval [00:03:40]

1.3 Influence Functions and Model Analysis [00:07:40]

1.4 Role of Code in LLM Reasoning [00:11:10]

1.5 Semantic Understanding and Physical Grounding [00:19:30]

2. Reasoning Architectures and Measurement

2.1 Measuring Understanding and Reasoning in Language Models [00:23:10]

2.2 Formal vs Approximate Reasoning and Model Creativity [00:26:40]

2.3 Symbolic vs Subsymbolic Computation Debate [00:34:10]

2.4 Neural Network Architectures and Tensor Product Representations [00:40:50]

3. AI Agency and Risk Assessment

3.1 Agency and Goal-Directed Behavior in Language Models [00:45:10]

3.2 Defining and Measuring Agency in AI Systems [00:49:50]

3.3 Core Knowledge Systems and Agency Detection [00:54:40]

3.4 Language Models as Agent Models and Simulator Theory [01:03:20]

3.5 AI Safety and Societal Control Mechanisms [01:07:10]

3.6 Evolution of AI Capabilities and Emergent Risks [01:14:20]

REFS:

[00:01:10] Procedural Knowledge in Pretraining & LLM Reasoning

Ruis et al., 2024

https://arxiv.org/abs/2411.12580

[00:03:50] EK-FAC Influence Functions in Large LMs

Grosse et al., 2023

https://arxiv.org/abs/2308.03296

[00:13:05] Surfaces and Essences: Analogy as the Fuel and Fire of Thinking

Hofstadter & Sander

https://www.amazon.com/Surfaces-Essences-Analogy-Fuel-Thinking/dp/0465018475

[00:13:45] Wittgenstein on Language Games

https://plato.stanford.edu/entries/wittgenstein/

[00:14:30] Montague Semantics for Natural Language

https://plato.stanford.edu/entries/montague-semantics/

[00:19:35] The Chinese Room Argument

David Cole

https://plato.stanford.edu/entries/chinese-room/

[00:19:55] ARC: Abstraction and Reasoning Corpus

François Chollet

https://arxiv.org/abs/1911.01547

[00:24:20] Systematic Generalization in Neural Nets

Lake & Baroni, 2023

https://www.nature.com/articles/s41586-023-06668-3

[00:27:40] Open-Endedness & Creativity in AI

Tim Rocktäschel

https://arxiv.org/html/2406.04268v1

[00:30:50] Fodor & Pylyshyn on Connectionism

https://www.sciencedirect.com/science/article/abs/pii/0010027788900315

[00:31:30] Tensor Product Representations

Smolensky, 1990

https://www.sciencedirect.com/science/article/abs/pii/000437029090007M

[00:35:50] DreamCoder: Wake-Sleep Program Synthesis

Kevin Ellis et al.

https://courses.cs.washington.edu/courses/cse599j1/22sp/papers/dreamcoder.pdf

[00:36:30] Compositional Generalization Benchmarks

Ruis, Lake et al., 2022

https://arxiv.org/pdf/2202.10745

[00:40:30] RNNs & Tensor Products

McCoy et al., 2018

https://arxiv.org/abs/1812.08718

[00:46:10] Formal Causal Definition of Agency

Kenton et al.

https://arxiv.org/pdf/2208.08345v2

[00:48:40] Agency in Language Models

Sumers et al.

https://arxiv.org/abs/2309.02427

[00:55:20] Heider & Simmel’s Moving Shapes Experiment

https://www.nature.com/articles/s41598-024-65532-0

[01:00:40] Language Models as Agent Models

Jacob Andreas, 2022

https://arxiv.org/abs/2212.01681

[01:13:35] Pragmatic Understanding in LLMs

Ruis et al.

https://arxiv.org/abs/2210.14986

Nicholas Carlini (Google DeepMind)

01/25/25 • 81 min

Nicholas Carlini from Google DeepMind offers his view of AI security, emergent LLM capabilities, and his groundbreaking model-stealing research. He reveals how LLMs can unexpectedly excel at tasks like chess and discusses the security pitfalls of LLM-generated code.

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments.

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. Are you interested in working on reasoning or getting involved in their events?

Go to https://tufalabs.ai/

***

Transcript: https://www.dropbox.com/scl/fi/lat7sfyd4k3g5k9crjpbf/CARLINI.pdf?rlkey=b7kcqbvau17uw6rksbr8ccd8v&dl=0

TOC:

1. ML Security Fundamentals

[00:00:00] 1.1 ML Model Reasoning and Security Fundamentals

[00:03:04] 1.2 ML Security Vulnerabilities and System Design

[00:08:22] 1.3 LLM Chess Capabilities and Emergent Behavior

[00:13:20] 1.4 Model Training, RLHF, and Calibration Effects

2. Model Evaluation and Research Methods

[00:19:40] 2.1 Model Reasoning and Evaluation Metrics

[00:24:37] 2.2 Security Research Philosophy and Methodology

[00:27:50] 2.3 Security Disclosure Norms and Community Differences

3. LLM Applications and Best Practices

[00:44:29] 3.1 Practical LLM Applications and Productivity Gains

[00:49:51] 3.2 Effective LLM Usage and Prompting Strategies

[00:53:03] 3.3 Security Vulnerabilities in LLM-Generated Code

4. Advanced LLM Research and Architecture

[00:59:13] 4.1 LLM Code Generation Performance and O(1) Labs Experience

[01:03:31] 4.2 Adaptation Patterns and Benchmarking Challenges

[01:10:10] 4.3 Model Stealing Research and Production LLM Architecture Extraction

REFS:

[00:01:15] Nicholas Carlini’s personal website & research profile (Google DeepMind, ML security) - https://nicholas.carlini.com/

[00:01:50] CentML AI compute platform for language model workloads - https://centml.ai/

[00:04:30] Seminal paper on neural network robustness against adversarial examples (Carlini & Wagner, 2016) - https://arxiv.org/abs/1608.04644

[00:05:20] Computer Fraud and Abuse Act (CFAA) – primary U.S. federal law on computer hacking liability - https://www.justice.gov/jm/jm-9-48000-computer-fraud

[00:08:30] Blog post: Emergent chess capabilities in GPT-3.5-turbo-instruct (Nicholas Carlini, Sept 2023) - https://nicholas.carlini.com/writing/2023/chess-llm.html

[00:16:10] Paper: “Self-Play Preference Optimization for Language Model Alignment” (Yue Wu et al., 2024) - https://arxiv.org/abs/2405.00675

[00:18:00] GPT-4 Technical Report: development, capabilities, and calibration analysis - https://arxiv.org/abs/2303.08774

[00:22:40] Historical shift from descriptive to algebraic chess notation (FIDE) - https://en.wikipedia.org/wiki/Descriptive_notation

[00:23:55] Analysis of distribution shift in ML (Hendrycks et al.) - https://arxiv.org/abs/2006.16241

[00:27:40] Nicholas Carlini’s essay “Why I Attack” (June 2024) – motivations for security research - https://nicholas.carlini.com/writing/2024/why-i-attack.html

[00:34:05] Google Project Zero’s 90-day vulnerability disclosure policy - https://googleprojectzero.blogspot.com/p/vulnerability-disclosure-policy.html

[00:51:15] Evolution of Google search syntax & user behavior (Daniel M. Russell) - https://www.amazon.com/Joy-Search-Google-Master-Information/dp/0262042878

[01:04:05] Rust’s ownership & borrowing system for memory safety - https://doc.rust-lang.org/book/ch04-00-understanding-ownership.html

[01:10:05] Paper: “Stealing Part of a Production Language Model” (Carlini et al., March 2024) – extraction attacks on ChatGPT, PaLM-2 - https://arxiv.org/abs/2403.06634

[01:10:55] First model stealing paper (Tramèr et al., 2016) – attacking ML APIs via prediction - https://arxiv.org/abs/1609.02943

Sepp Hochreiter - LSTM: The Comeback Story?

02/12/25 • 67 min

Sepp Hochreiter is the co-inventor, with Jürgen Schmidhuber, of LSTM (Long Short-Term Memory) networks, a foundational technology in AI. Sepp discusses his journey, the origins of LSTM, and why he believes his latest work, xLSTM, could be the next big thing in AI, particularly for applications like robotics and industrial simulation. He also shares his controversial perspective on Large Language Models (LLMs) and why reasoning is a critical missing piece in current AI systems.

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments. Check out their super fast DeepSeek R1 hosting!

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. They are hiring a Chief Engineer and ML engineers, and they host events in Zurich.

Go to https://tufalabs.ai/

***

TRANSCRIPT AND BACKGROUND READING:

https://www.dropbox.com/scl/fi/n1vzm79t3uuss8xyinxzo/SEPPH.pdf?rlkey=fp7gwaopjk17uyvgjxekxrh5v&dl=0

Prof. Sepp Hochreiter

https://www.nx-ai.com/

https://x.com/hochreitersepp

https://scholar.google.at/citations?user=tvUH3WMAAAAJ&hl=en

TOC:

1. LLM Evolution and Reasoning Capabilities

[00:00:00] 1.1 LLM Capabilities and Limitations Debate

[00:03:16] 1.2 Program Generation and Reasoning in AI Systems

[00:06:30] 1.3 Human vs AI Reasoning Comparison

[00:09:59] 1.4 New Research Initiatives and Hybrid Approaches

2. LSTM Technical Architecture

[00:13:18] 2.1 LSTM Development History and Technical Background

[00:20:38] 2.2 LSTM vs RNN Architecture and Computational Complexity

[00:25:10] 2.3 xLSTM Architecture and Flash Attention Comparison

[00:30:51] 2.4 Evolution of Gating Mechanisms from Sigmoid to Exponential

3. Industrial Applications and Neuro-Symbolic AI

[00:40:35] 3.1 Industrial Applications and Fixed Memory Advantages

[00:42:31] 3.2 Neuro-Symbolic Integration and Pi AI Project

[00:46:00] 3.3 Integration of Symbolic and Neural AI Approaches

[00:51:29] 3.4 Evolution of AI Paradigms and System Thinking

[00:54:55] 3.5 AI Reasoning and Human Intelligence Comparison

[00:58:12] 3.6 NXAI Company and Industrial AI Applications

REFS:

[00:00:15] Seminal LSTM paper (Hochreiter & Schmidhuber, 1997)

https://direct.mit.edu/neco/article-abstract/9/8/1735/6109/Long-Short-Term-Memory

[00:04:20] Kolmogorov complexity and program composition limitations (Kolmogorov)

https://link.springer.com/article/10.1007/BF02478259

[00:07:10] Limitations of LLM mathematical reasoning and symbolic integration (Various Authors)

https://www.arxiv.org/pdf/2502.03671

[00:09:05] AlphaGo’s Move 37 demonstrating creative AI (Google DeepMind)

https://deepmind.google/research/breakthroughs/alphago/

[00:10:15] New AI research lab in Zurich for fundamental LLM research (Benjamin Crouzier)

https://tufalabs.ai

[00:19:40] Introduction of xLSTM with exponential gating (Beck, Hochreiter, et al.)

https://arxiv.org/abs/2405.04517

[00:22:55] FlashAttention: fast & memory-efficient attention (Tri Dao et al.)

https://arxiv.org/abs/2205.14135

[00:31:00] Historical use of sigmoid/tanh activation in the 1990s (James A. McCaffrey)

https://visualstudiomagazine.com/articles/2015/06/01/alternative-activation-functions.aspx

[00:36:10] Mamba selective state space model architecture (Albert Gu & Tri Dao)

https://arxiv.org/abs/2312.00752

[00:46:00] Austria’s Pi AI project integrating symbolic & neural AI (Hochreiter et al.)

https://www.jku.at/en/institute-of-machine-learning/research/projects/

[00:48:10] Neuro-symbolic integration challenges in language models (Diego Calanzone et al.)

https://openreview.net/forum?id=7PGluppo4k

[00:49:30] JKU Linz’s historical and neuro-symbolic research (Sepp Hochreiter)

https://www.jku.at/en/news-events/news/detail/news/bilaterale-ki-projekt-unter-leitung-der-jku-erhaelt-fwf-cluster-of-excellence/

YT: https://www.youtube.com/watch?v=8u2pW2zZLCs

Want to Understand Neural Networks? Think Elastic Origami! - Prof. Randall Balestriero

02/08/25 • 78 min

Professor Randall Balestriero joins us to discuss neural network geometry, spline theory, and emerging phenomena in deep learning, based on research presented at ICML. Topics include the delayed emergence of adversarial robustness in neural networks ("grokking"), geometric interpretations of neural networks via spline theory, and challenges in reconstruction learning. We also cover geometric analysis of Large Language Models (LLMs) for toxicity detection and the relationship between intrinsic dimensionality and model control in RLHF.
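As a rough illustration of the spline view (a deliberate simplification, not Balestriero's full framework), a one-hidden-layer ReLU network acting on a single scalar input is a continuous piecewise-linear function: each hidden unit switches on or off at x = -b/w, and between those knots the network is exactly affine. The weights below are arbitrary illustrative values, and the helper names are hypothetical.

```python
import math


def relu_net(x: float, w: list[float], b: list[float], v: list[float]) -> float:
    """One hidden ReLU layer on a scalar input: f(x) = sum_i v_i * max(0, w_i * x + b_i)."""
    return sum(vi * max(0.0, wi * x + bi) for wi, bi, vi in zip(w, b, v))


def knots(w: list[float], b: list[float]) -> list[float]:
    """Breakpoints where some hidden unit switches between active and inactive."""
    return sorted(-bi / wi for wi, bi in zip(w, b) if wi != 0)


def is_affine_on(f, lo: float, hi: float, samples: int = 5) -> bool:
    """Check that f lies on the straight line through (lo, f(lo)) and (hi, f(hi))."""
    slope = (f(hi) - f(lo)) / (hi - lo)
    xs = [lo + (hi - lo) * k / (samples + 1) for k in range(1, samples + 1)]
    return all(math.isclose(f(x), f(lo) + slope * (x - lo), abs_tol=1e-9) for x in xs)


# Illustrative weights for three hidden units.
w, b, v = [1.0, -1.0, 2.0], [0.0, 1.0, -1.0], [1.0, 2.0, -1.0]


def f(x: float) -> float:
    return relu_net(x, w, b, v)


ks = knots(w, b)  # breakpoints at x = 0, 0.5 and 1
```

Here `is_affine_on(f, 0.0, 0.5)` and `is_affine_on(f, 0.5, 1.0)` hold, while `is_affine_on(f, 0.0, 1.0)` fails because that interval straddles a knot. Deeper networks compose such pieces, which is what makes their partition of input space worth studying.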

SPONSOR MESSAGES:

***

CentML offers competitive pricing for GenAI model deployment, with flexible options to suit a wide range of models, from small to large-scale deployments.

https://centml.ai/pricing/

Tufa AI Labs is a brand new research lab in Zurich, started by Benjamin Crouzier and focused on o-series-style reasoning and AGI. Are you interested in working on reasoning or getting involved in their events?

Go to https://tufalabs.ai/

***

Randall Balestriero

https://x.com/randall_balestr

https://randallbalestriero.github.io/

Show notes and transcript: https://www.dropbox.com/scl/fi/3lufge4upq5gy0ug75j4a/RANDALLSHOW.pdf?rlkey=nbemgpa0jhawt1e86rx7372e4&dl=0

TOC:

Introduction

00:00:00: Introduction

Neural Network Geometry and Spline Theory

00:01:41: Neural Network Geometry and Spline Theory

00:07:41: Deep Networks Always Grok

00:11:39: Grokking and Adversarial Robustness

00:16:09: Double Descent and Catastrophic Forgetting

Reconstruction Learning

00:18:49: Reconstruction Learning

00:24:15: Frequency Bias in Neural Networks

Geometric Analysis of Neural Networks

00:29:02: Geometric Analysis of Neural Networks

00:34:41: Adversarial Examples and Region Concentration

LLM Safety and Geometric Analysis

00:40:05: LLM Safety and Geometric Analysis

00:46:11: Toxicity Detection in LLMs

00:52:24: Intrinsic Dimensionality and Model Control

00:58:07: RLHF and High-Dimensional Spaces

Conclusion

01:02:13: Neural Tangent Kernel

01:08:07: Conclusion

REFS:

[00:01:35] Humayun – Deep network geometry & input space partitioning

https://arxiv.org/html/2408.04809v1

[00:03:55] Balestriero & Paris – Linking deep networks to adaptive spline operators

https://proceedings.mlr.press/v80/balestriero18b/balestriero18b.pdf

[00:13:55] Song et al. – Gradient-based white-box adversarial attacks

https://arxiv.org/abs/2012.14965

[00:16:05] Humayun, Balestriero & Baraniuk – Grokking phenomenon & emergent robustness

https://arxiv.org/abs/2402.15555

[00:18:25] Humayun – Training dynamics & double descent via linear region evolution

https://arxiv.org/abs/2310.12977

[00:20:15] Balestriero – Power diagram partitions in DNN decision boundaries

https://arxiv.org/abs/1905.08443

[00:23:00] Frankle & Carbin – Lottery Ticket Hypothesis for network pruning

https://arxiv.org/abs/1803.03635

[00:24:00] Belkin et al. – Double descent phenomenon in modern ML

https://arxiv.org/abs/1812.11118

[00:25:55] Balestriero et al. – Batch normalization’s regularization effects

https://arxiv.org/pdf/2209.14778

[00:29:35] EU – EU AI Act 2024 with compute restrictions

https://www.lw.com/admin/upload/SiteAttachments/EU-AI-Act-Navigating-a-Brave-New-World.pdf

[00:39:30] Humayun, Balestriero & Baraniuk – SplineCam: Visualizing deep network geometry

https://openaccess.thecvf.com/content/CVPR2023/papers/Humayun_SplineCam_Exact_Visualization_and_Characterization_of_Deep_Network_Geometry_and_CVPR_2023_paper.pdf

[00:40:40] Carlini – Trade-offs between adversarial robustness and accuracy

https://arxiv.org/pdf/2407.20099

[00:44:55] Balestriero & LeCun – Limitations of reconstruction-based learning methods

https://openreview.net/forum?id=ez7w0Ss4g9

(truncated, see shownotes PDF)

Joscha Bach - Why Your Thoughts Aren't Yours.

10/20/24 • 112 min

Dr. Joscha Bach discusses advanced AI, consciousness, and cognitive modeling. He presents consciousness as a virtual property emerging from self-organizing software patterns, challenging panpsychism and materialism. Bach introduces "Cyberanima," reinterpreting animism through information processing, viewing spirits as self-organizing software agents.

He addresses limitations of current large language models and advocates for smaller, more efficient AI models capable of reasoning from first principles. Bach describes his work with Liquid AI on novel neural network architectures for improved expressiveness and efficiency.

The interview covers AI's societal implications, including regulation challenges and impact on innovation. Bach argues for balancing oversight with technological progress, warning against overly restrictive regulations.

Throughout, Bach frames consciousness, intelligence, and agency as emergent properties of complex information processing systems, proposing a computational framework for cognitive phenomena and reality.

SPONSOR MESSAGE:

DO YOU WANT TO WORK ON ARC with the MindsAI team (current ARC winners)? MLST is sponsored by Tufa Labs. Focus: ARC, LLMs, test-time compute, active inference, System 2 reasoning, and more. Future plans: expanding to complex environments like Warcraft 2 and StarCraft 2. Interested? Apply for an ML research position: benjamin@tufa.ai

TOC

[00:00:00] 1.1 Consciousness and Intelligence in AI Development

[00:07:44] 1.2 Agency, Intelligence, and Their Relationship to Physical Reality

[00:13:36] 1.3 Virtual Patterns and Causal Structures in Consciousness

[00:25:49] 1.4 Reinterpreting Concepts of God and Animism in Information Processing Terms

[00:32:50] 1.5 Animism and Evolution as Competition Between Software Agents

2. Self-Organizing Systems and Cognitive Models in AI

[00:37:59] 2.1 Consciousness as self-organizing software

[00:45:49] 2.2 Critique of panpsychism and alternative views on consciousness

[00:50:48] 2.3 Emergence of consciousness in complex systems

[00:52:50] 2.4 Neuronal motivation and the origins of consciousness

[00:56:47] 2.5 Coherence and Self-Organization in AI Systems

3. Advanced AI Architectures and Cognitive Processes

[00:57:50] 3.1 Second-Order Software and Complex Mental Processes

[01:01:05] 3.2 Collective Agency and Shared Values in AI

[01:05:40] 3.3 Limitations of Current AI Agents and LLMs

[01:06:40] 3.4 Liquid AI and Novel Neural Network Architectures

[01:10:06] 3.5 AI Model Efficiency and Future Directions

[01:19:00] 3.6 LLM Limitations and Internal State Representation

4. AI Regulation and Societal Impact

[01:31:23] 4.1 AI Regulation and Societal Impact

[01:49:50] 4.2 Open-Source AI and Industry Challenges

Refs in shownotes and MP3 metadata

Shownotes:

https://www.dropbox.com/scl/fi/g28dosz19bzcfs5imrvbu/JoschaInterview.pdf?rlkey=s3y18jy192ktz6ogd7qtvry3d&st=10z7q7w9&dl=0

1 Listener

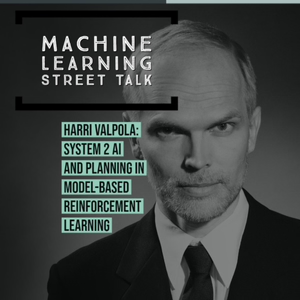

Harri Valpola: System 2 AI and Planning in Model-Based Reinforcement Learning

Machine Learning Street Talk (MLST)

05/25/20 • 98 min

In this episode of Machine Learning Street Talk, Tim Scarfe, Yannic Kilcher and Connor Shorten interviewed Harri Valpola, CEO and Founder of Curious AI. We continued our discussion of System 1 and System 2 thinking in deep learning, as well as miscellaneous topics around model-based reinforcement learning. Dr. Valpola describes some of the challenges of modelling industrial control processes, such as water sewage filters and paper mills, using model-based RL. Dr. Valpola and his collaborators recently published "Regularizing Trajectory Optimization with Denoising Autoencoders", which addresses the problem of planning algorithms exploiting inaccuracies in their world models!

00:00:00 Intro to Harri and Curious AI, System 1/System 2

00:04:50 Background on model-based RL challenges from Tim

00:06:26 Other interesting research papers on model-based RL from Connor

00:08:36 Intro to Curious AI recent NeurIPS paper on model-based RL and denoising autoencoders from Yannic

00:21:00 Main show kick-off, System 1/2

00:31:50 Where does the simulator come from?

00:33:59 Evolutionary priors

00:37:17 Consciousness

00:40:37 How does one build a company like Curious AI?

00:46:42 Deep Q Networks

00:49:04 Planning and Model based RL

00:53:04 Learning good representations

00:55:55 Typical problem Curious AI might solve in industry

01:00:56 Exploration

01:08:00 Their paper: Regularizing Trajectory Optimization with Denoising Autoencoders

01:13:47 What is epistemic uncertainty?

01:16:44 How would Curious AI develop these models?

01:18:00 Explainability and simulations

01:22:33 How system 2 works in humans

01:26:11 Planning

01:27:04 Advice for starting an AI company

01:31:31 Real world implementation of planning models

01:33:49 Publishing research and openness

We really hope you enjoy this episode. Please subscribe!

Regularizing Trajectory Optimization with Denoising Autoencoders: https://papers.nips.cc/paper/8552-regularizing-trajectory-optimization-with-denoising-autoencoders.pdf

Pulp, Paper & Packaging: A Future Transformed through Deep Learning: https://thecuriousaicompany.com/pulp-paper-packaging-a-future-transformed-through-deep-learning/

Curious AI: https://thecuriousaicompany.com/

Harri Valpola Publications: https://scholar.google.com/citations?user=1uT7-84AAAAJ&hl=en&oi=ao

Some interesting papers around Model-Based RL:

GameGAN: https://cdn.arstechnica.net/wp-content/uploads/2020/05/Nvidia_GameGAN_Research.pdf

Plan2Explore: https://ramanans1.github.io/plan2explore/

World Models: https://worldmodels.github.io/

MuZero: https://arxiv.org/pdf/1911.08265.pdf

PlaNet: A Deep Planning Network for RL: https://ai.googleblog.com/2019/02/introducing-planet-deep-planning.html

Dreamer: Scalable RL using World Models: https://ai.googleblog.com/2020/03/introducing-dreamer-scalable.html

Model Based RL for Atari: https://arxiv.org/pdf/1903.00374.pdf


FAQ

How many episodes does Machine Learning Street Talk (MLST) have?

Machine Learning Street Talk (MLST) currently has 215 episodes available.

What topics does Machine Learning Street Talk (MLST) cover?

The podcast is listed under the Podcasts and Technology categories.

What is the most popular episode on Machine Learning Street Talk (MLST)?

The episode titled 'Nicholas Carlini (Google DeepMind)' is the most popular.

What is the average episode length on Machine Learning Street Talk (MLST)?

The average episode length on Machine Learning Street Talk (MLST) is 95 minutes.

How often are episodes of Machine Learning Street Talk (MLST) released?

Episodes of Machine Learning Street Talk (MLST) are typically released every 5 days and 16 hours.

When was the first episode of Machine Learning Street Talk (MLST)?

The first episode of Machine Learning Street Talk (MLST) was released on Apr 24, 2020.
